Chang Xingyu, Alex Wing Cheung TSE

u3575230@connect.hku.hk, alextse@connect.hku.hk

The University of Hong Kong

Abstract: Research on applying intelligent tutoring systems to English teaching began in the middle of the last century. Progress in English writing has been rapid, and many automated writing evaluation (AWE) systems have been created according to the principles of intelligent tutoring systems (Sleeman & Brown, 1982). However, most research focuses on the consistency of AWE systems with human raters and on how to use these systems to decrease teachers' workload; little research has examined whether they improve students' English writing ability. Because "Pigai" can be regarded as the most widely used AWE system in mainland China, its role in improving students' English writing ability should be tested. In this study, we took the role of school technology coordinators to test the effectiveness of this AWE system and to provide useful suggestions for its improvement. A quantitative experimental design was adopted. The participants were 100 second-year English major students at a public university in mainland China. This paper reports on two research questions: 1) Does the AWE system significantly improve students’ English writing grades compared with traditional teacher evaluation? 2) Are there differences between students' learning attitudes towards the AWE system and towards traditional teacher evaluation? After a four-week intervention, the study found that both the AWE system and traditional teacher evaluation improved students’ English writing grades, that the AWE system produced the larger improvement, and that students held a very positive attitude towards the system.

Keywords: intelligent tutoring system, automated writing evaluation system, English writing, school technology coordinator

1. Introduction: Addressing problems in applying automated writing evaluation (AWE) systems to students’ English writing to highlight the significance and originality of this study

1.1. Significance

Overburdening is now the normal working situation of teachers (Liu & Onwuegbuzie, 2012). Many teachers do not have enough time to give detailed revision suggestions for every writing problem; most point out only broad aspects, such as problems of structure, text, coherence, and cohesion, rather than each detailed grammar problem. Schools in many countries and regions have widely accepted AWE systems as a way to imitate real tutors and automatically grade students' English compositions (Palermo & Thomson, 2018). Besides, most AWE systems are supported by favorable validity evidence based on the consistency and agreement between the automated system and human raters (Enright & Quinlan, 2010; Keith, 2003; Vantage Learning, 2006; Weigle, 2010). Thus, many supporters think that an AWE system can play the role of tutor and reduce teachers' workload (Warschauer & Grimes, 2008). However, few studies have examined whether such systems benefit students' English writing grades (Ericsson, 2006). Maintaining consistency with human evaluation and reducing teachers' work pressure are valuable, but the more important question is whether students can improve their grades through the system. The effectiveness of "Pigai" in improving students’ English writing ability should be tested because it can be regarded as the most widely used English composition evaluation system in mainland China (Hou, 2020); teachers who already use the system, or who are still on the sidelines, may want to know more about it.

With the fast development of information and communication technology (ICT) in school education, numerous organizational structures have been designed to handle technology functions within schools. One of the most common in smaller schools is a single technology coordinator position (Strudler, 1995). Technology coordinators serve as school technicians responsible for maintaining ICT, and they lead educational technology innovation (Evans-Andris, 1995). In this study, we took the role of school technology coordinators to test this AWE system, hoping to provide suggestions on its utility and future development.

1.2. Originality

| Search Engine | Key Words | Results Number | Time Range | Search Date |
|---|---|---|---|---|
| Google Scholar | “Pigai” and “English Writing” and “English major” | 34 | 2010-2015 | 2021/05/05 |
| Google Scholar | “Pigai” and “English Writing” and “English major” | 157 | 2015- | 2021/05/05 |
| Increase number in recent 5 years: 123 | | | | |
| Google Scholar | “批改网” “英语写作” “英语专业” | 107 | 2010-2015 | 2021/05/05 |
| Google Scholar | “批改网” “英语写作” “英语专业” | 373 | 2015- | 2021/05/05 |
| Increase number in recent 5 years: 266 | | | | |

(Figure 1)

We searched the keywords in Figure 1 and found that research on “Pigai”, “English Writing”, and “English major” is extremely limited: fewer than 200 articles in the last ten years, with an increase of only 123 articles in the most recent five years. Since the target users of the “Pigai” system are Chinese learners (Pigai R & D Team, 2018), we also searched for the same keywords in Chinese to increase the reliability of the data and found a total of 480 studies in the last ten years, which is still not large. Thus, there is an obvious lack of comprehensive research on the effectiveness of this AWE system for English major students’ English writing.

2. Working principles of the AWE system

The “Pigai” system works according to the principles of the intelligent tutoring system (ITS), which gives students opportunities to practice writing and receive prompt individual feedback without the guidance of a physically present teacher (He & Li, 2005).

2.1 What is ITS?

Since many AWE systems are created according to the principles of ITS, the concept of ITS is discussed first. ITS is a very broad term that includes all computer programs that integrate intelligence and are used to improve learning (Freedman et al., 2000). An ITS also refers to a software system that stores subject and teaching knowledge for a specific area and carries out individualized teaching: it selects teaching strategies according to students' achievements and simulates the teaching of human experts (He & Li, 2005).

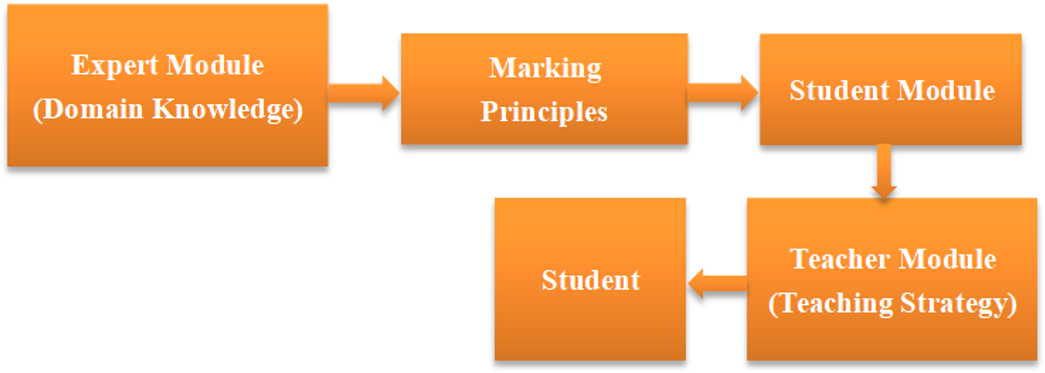

A complete ITS is generally composed of three basic modules (Psotka et al., 1988), as shown in the figure below.

| Module | Description |
|---|---|
| Expert module (domain knowledge) | The professional knowledge that the system tries to transport to students; it represents the intelligence of experts. |
| Student module (teaching strategy) | What students already know, what they do not know, and their cognitive characteristics; it represents students' intelligence. |
| Teacher module | The targeted teaching strategies of teachers; it represents teachers' intelligence. |

(Figure 2)

Figure 2 shows the detailed explanation of each module in ITS.

(Figure 3, Psotka et al., 1988)

Figure 3 shows the operation of ITS. First, information resources are extracted from the expert module to form a marking principles pack. Then the information in the student module is retrieved and immediately transmitted to the teacher module. The teacher module uses the target resources of the expert module to evaluate the student's work and finally gives evaluation feedback to the student (Psotka et al., 1988).

Research on ITS in writing has made rapid progress. Such systems can intelligently correct the compositions submitted by students across multiple dimensions of English writing and point out the problems in them. Many automated writing evaluation (AWE) systems have now been created according to the working principles of ITS, and “Pigai” is one of them (Yang & Dai, 2015).
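The three-step flow described for Figure 3 can be illustrated with a minimal Python sketch. It is not taken from any real ITS or from “Pigai”: the class names, the two toy marking rules, and the sample sentence are all hypothetical and only show how the modules hand information to one another.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class ExpertModule:
    """Domain knowledge: named marking principles (hypothetical toy rules)."""
    principles: Dict[str, Callable[[str], bool]] = field(default_factory=dict)

    def marking_pack(self) -> Dict[str, Callable[[str], bool]]:
        # Step 1: extract the resources that form the marking principles pack.
        return dict(self.principles)


@dataclass
class StudentModule:
    """What is known about the student; here only the submitted text."""
    student_id: str
    submission: str


class TeacherModule:
    """Teaching strategy: applies the pack to the student's work."""

    def evaluate(self, pack, student: StudentModule) -> List[str]:
        # Step 3: check the work against every principle and collect feedback.
        feedback = []
        for name, passes in pack.items():
            status = "ok" if passes(student.submission) else "needs revision"
            feedback.append(f"{name}: {status}")
        return feedback


expert = ExpertModule({
    "starts with a capital letter": lambda text: text[:1].isupper(),
    "ends with a full stop": lambda text: text.rstrip().endswith("."),
})
student = StudentModule("R1", "technology helps students learn.")
# Step 2: the student information is passed to the teacher module.
feedback = TeacherModule().evaluate(expert.marking_pack(), student)
for line in feedback:
    print(line)
```

A real system would replace the two toy rules with a much richer set of marking principles, but the division of responsibilities between the three modules would stay the same.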

2.2 How does the “Pigai” system work?

“Pigai” treats students’ compositions as a learner corpus and scores each composition according to 192 dimensions (such as lexicology, syntax, and fixed collocation). It compares the students' compositions with a standard corpus to produce the “whole comments”, “score”, and “sentences review”. The whole process resembles a teacher correcting students' compositions along multiple dimensions to give overall feedback (Pigai R & D Team, 2018). The general structure of “Pigai” is shown in Figure 4:

(Figure 4)

Figure 4 marks the parts of the “Pigai” system that correspond to the modules of ITS. After students submit their compositions, the system automatically matches them against the target language "corpus" of the expert module using the 192-dimension "marking principles" and gives the corresponding scores, comments, and sentences review (Pigai R & D Team, 2018).

(Figure 5)

(Figure 6)

Figure 5 and Figure 6 show real pages of "Pigai". When students submit their papers, "Pigai" shows the "whole comments", "final score", and "sentences review" for each paper.

3. Research design: Experimental design through quantitative approach

An experimental design with a quantitative approach was adopted to investigate whether the AWE system helps improve students' English writing grades and what students' attitudes towards it are. An overview of the research design is illustrated in Figure 7.

(Figure 7)

*Pretest and post-test: Writing tasks (the pretest and post-test have different writing topics but the same writing requirements and difficulty).

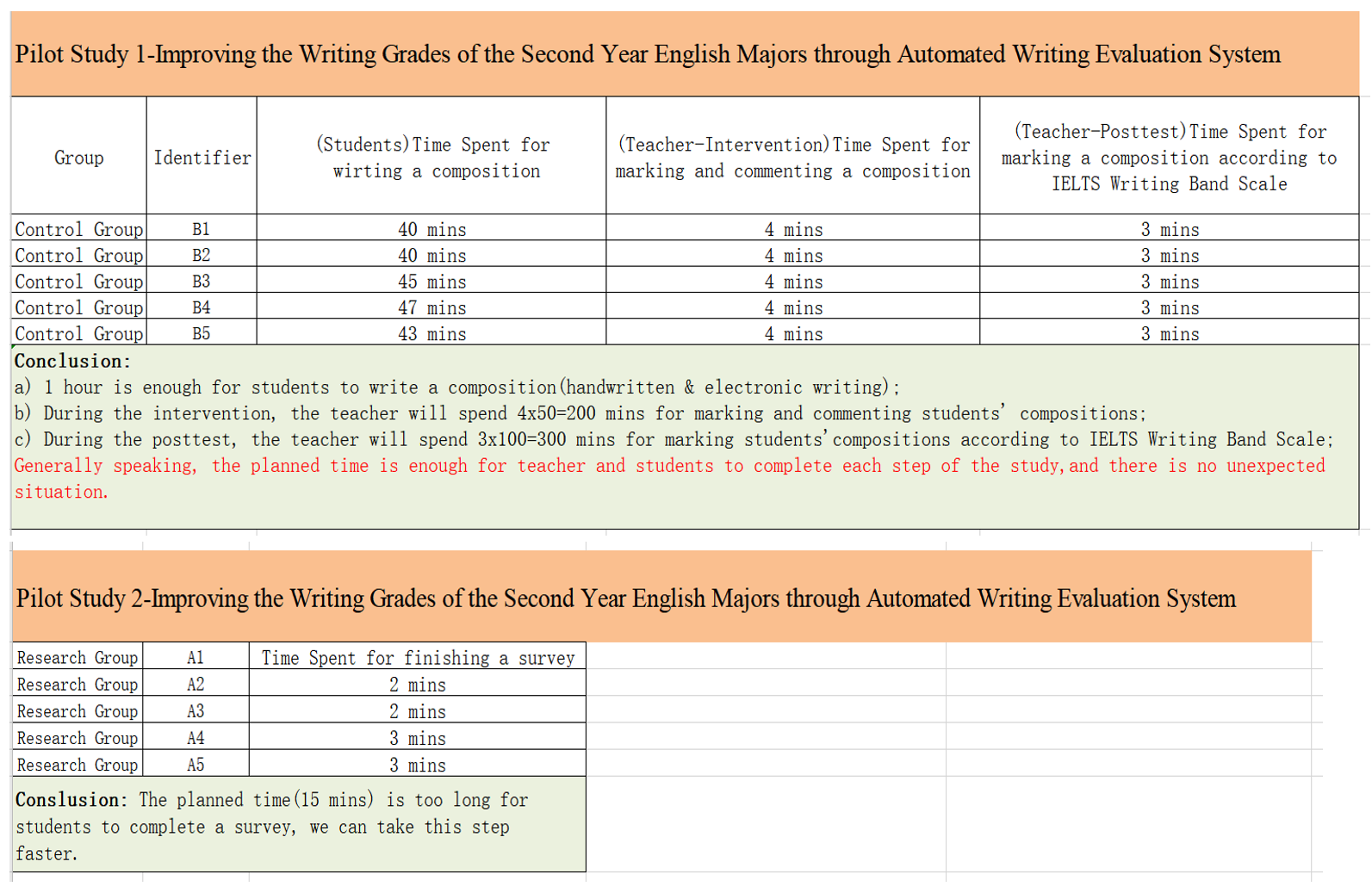

Before the pretest and the students' attitude survey, two pilot studies were conducted to measure the time participants need to write a composition, the time the teacher needs to mark a composition, and the time participants need to complete the survey, so that the schedule of the study could be adjusted. Appendix One shows the results of the two pilot studies.

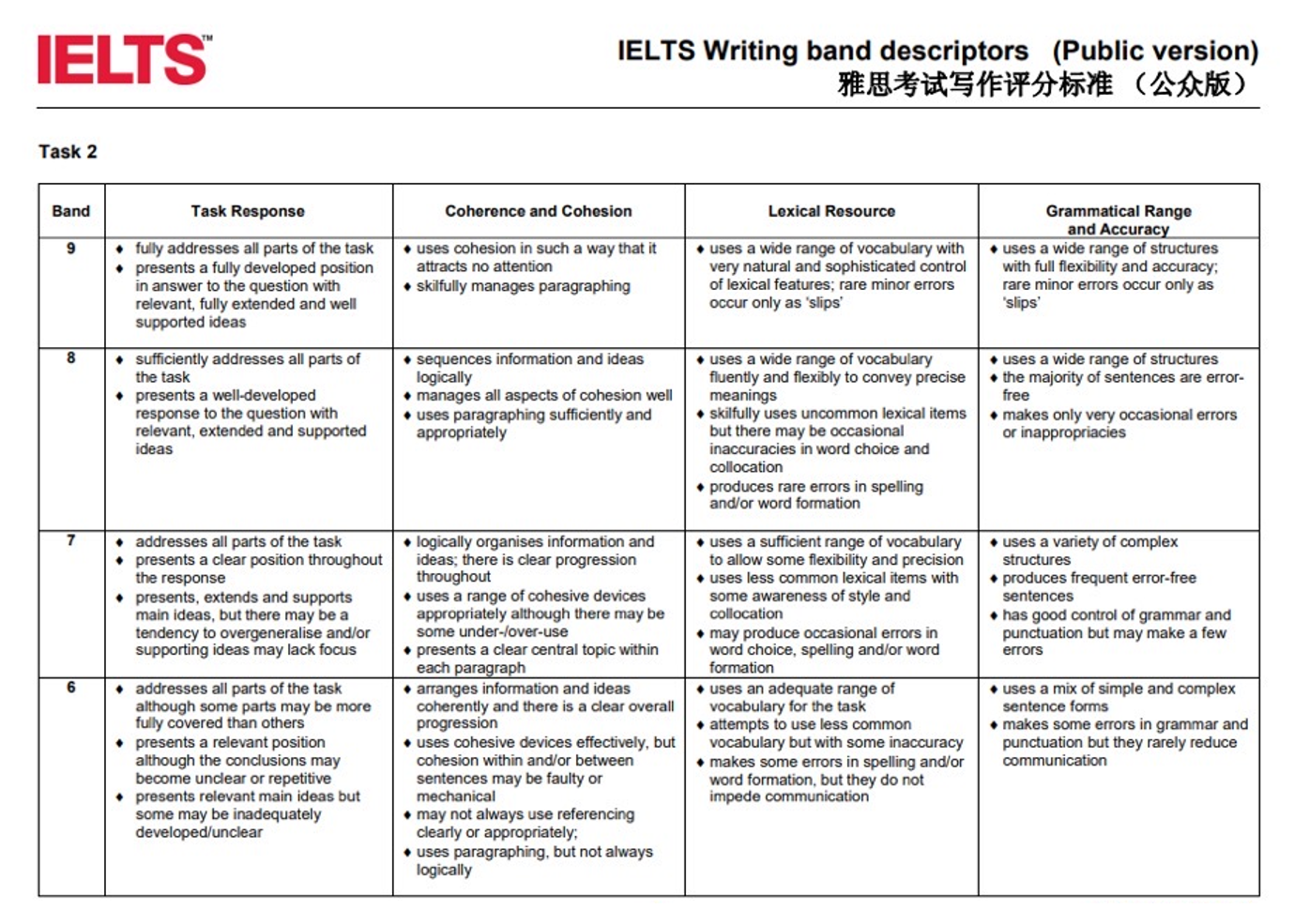

Pretest: All students were given the same writing topic. Before writing, the IELTS writing band descriptions scale (IELTS British Council, 2021) was explained to the students, and their writing was then marked according to the principles in the scale. Appendix Two shows the latest IELTS writing band descriptions scale.

Intervention: The 100 students were randomly assigned to two treatments of 50 students each. The research group was evaluated by the AWE system and the control group by the teacher. To protect privacy, every student was given a code: R1-R50 in the research group and C1-C50 in the control group. The intervention lasted four weeks, and both groups were given the same 8 writing tasks with the same requirements and difficulty. The marking principles of “Pigai” are shown in Figure 4, and the teachers followed the marking principles of the IELTS scale.

Post-test: After the four-week intervention, all students repeated the pretest procedure with a new writing topic.

Students’ attitude survey: After the post-test, a survey about students’ learning attitudes towards the two treatments was given to the students. The survey included 12 Likert-type questions, rated from strongly agree (4 points) to strongly disagree (0 points).
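The random assignment and coding described in the intervention step could be carried out along the following lines. This is a sketch under assumptions: the paper does not say which tool was used, and the function name, the roster indices, and the seed value are hypothetical.

```python
import random


def assign_groups(n_students=100, seed=None):
    """Randomly split students into research (R) and control (C) groups of equal size."""
    rng = random.Random(seed)
    roster = list(range(1, n_students + 1))  # hypothetical roster indices
    rng.shuffle(roster)
    half = n_students // 2
    codes = {}
    # The first half of the shuffled roster receives codes R1..R50.
    for i, student in enumerate(roster[:half], start=1):
        codes[student] = f"R{i}"
    # The second half receives codes C1..C50.
    for i, student in enumerate(roster[half:], start=1):
        codes[student] = f"C{i}"
    return codes


codes = assign_groups(seed=2021)  # fixed seed only to make the sketch repeatable
print(sum(code.startswith("R") for code in codes.values()), "students in the research group")
```

Shuffling the whole roster once and cutting it in half guarantees two groups of exactly 50, which independent coin flips per student would not.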

3.1 Quantitative approach: True-experimental design

To evaluate precisely whether the treatment (intervention with the AWE system) leads to a change in the variable (students’ English writing grades), this study adopted a quantitative approach, in which participants’ grades under one condition are compared with another group's grades under another condition through the quantified collection and analysis of data (Becker et al., 2012). As illustrated in Figure 7, the quantitative approach was used to investigate: 1) whether the AWE system significantly improves students’ English writing grades compared with traditional teacher evaluation; and 2) whether students’ learning attitudes towards the AWE system differ from those towards traditional teacher evaluation. An experimental design was applied because there were two groups, a research group and a control group, and we intentionally controlled and determined the conditions of events (Cohen, Manion, & Morrison, 2013). Because the participants were randomly assigned to the two groups, this was a true experimental design: the most important and obvious difference between a quasi-experiment and a true experiment is that the groups of a true experiment are constituted randomly rather than by other means (Cohen, Manion, & Morrison, 2013).

3.2 Alignment of data collection and data analysis

To answer the research questions about whether learning through the AWE system helps improve students’ English writing grades and how students regard it, the pretest, post-test, and students’ attitude survey were designed specifically for this study, and the data were then collected and analyzed carefully.

(Figure 8)

(Figure 9)

Figure 8 and Figure 9 show the alignment among research questions, instruments, participants, data collection time, and data analysis methods of this study.

4. Results and discussion: The AWE system was effective in students’ English Writing

This study explored two research questions to test the effectiveness of the AWE system for students’ English writing: 1) Does the AWE system significantly improve students’ English writing grades compared with traditional teacher evaluation? 2) Are there differences between students’ learning attitudes towards the AWE system and towards traditional teacher evaluation? The finding is that this AWE system is useful for improving students' English writing grades, and students' attitude towards it is very positive.

4.1 Impact of the AWE system on students’ English writing

To answer the first research question, we first checked whether the two groups were comparable. First, a Chi-squared test for gender gave P=0.779>0.05, so there is no significant difference in gender between the two groups. Second, an Independent Sample T-test for age gave P=0.866>0.05, so there is no significant difference in age between the two groups. Third, an Independent Sample T-test for students' English writing grades in the pretest gave P=0.659>0.05, so there is no significant difference in pretest grades between the two groups. These three analyses indicate that the random assignment was successful and that few interfering factors were present in the study.

| Group | Research Group (M ± SD) (n=50) | Control Group (M ± SD) (n=50) | F/χ²/t value | P value |
|---|---|---|---|---|
| Gender (Male/Female) | 8/42 | 7/43 | 0.078 | 0.779 |
| Age | 19.4±0.606 | 19.42±0.575 | -0.169 | 0.866 |
| Pretest | 6.73±0.564 | 6.78±0.564 | -0.443 | 0.659 |

(Figure 10)

Figure 10 shows the descriptive statistics for these three analyses. There are no significant differences in gender, age, or pretest grades between the two groups.
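The test statistics in Figure 10 can be recomputed from the reported summary values. The sketch below uses only the Python standard library rather than SPSS, which the study used; the function names are hypothetical, and the chi-square is computed without a continuity correction, which is an assumption about how the reported value was obtained.

```python
from math import erfc, sqrt


def chi_square_2x2(table):
    """Pearson chi-square (no continuity correction) for a 2x2 count table."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    chi2 = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n
            chi2 += (observed - expected) ** 2 / expected
    # With 1 degree of freedom the upper-tail probability is erfc(sqrt(chi2 / 2)).
    return chi2, erfc(sqrt(chi2 / 2))


def t_from_summary(mean1, sd1, n1, mean2, sd2, n2):
    """Independent-samples t statistic (pooled variance) from means, SDs and sizes."""
    pooled_var = ((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / (n1 + n2 - 2)
    return (mean1 - mean2) / sqrt(pooled_var * (1 / n1 + 1 / n2))


# Rows: research group, control group; columns: male, female.
chi2, p_gender = chi_square_2x2([[8, 42], [7, 43]])
t_age = t_from_summary(19.4, 0.606, 50, 19.42, 0.575, 50)
t_pretest = t_from_summary(6.73, 0.564, 50, 6.78, 0.564, 50)
print(round(chi2, 3), round(p_gender, 3), round(t_age, 3), round(t_pretest, 3))
```

Rounded to three decimals, the chi-square value, its P value, and the two t values agree with the entries in Figure 10. The P values for the t-tests are not recomputed here because they require the t distribution, which the standard library does not provide.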

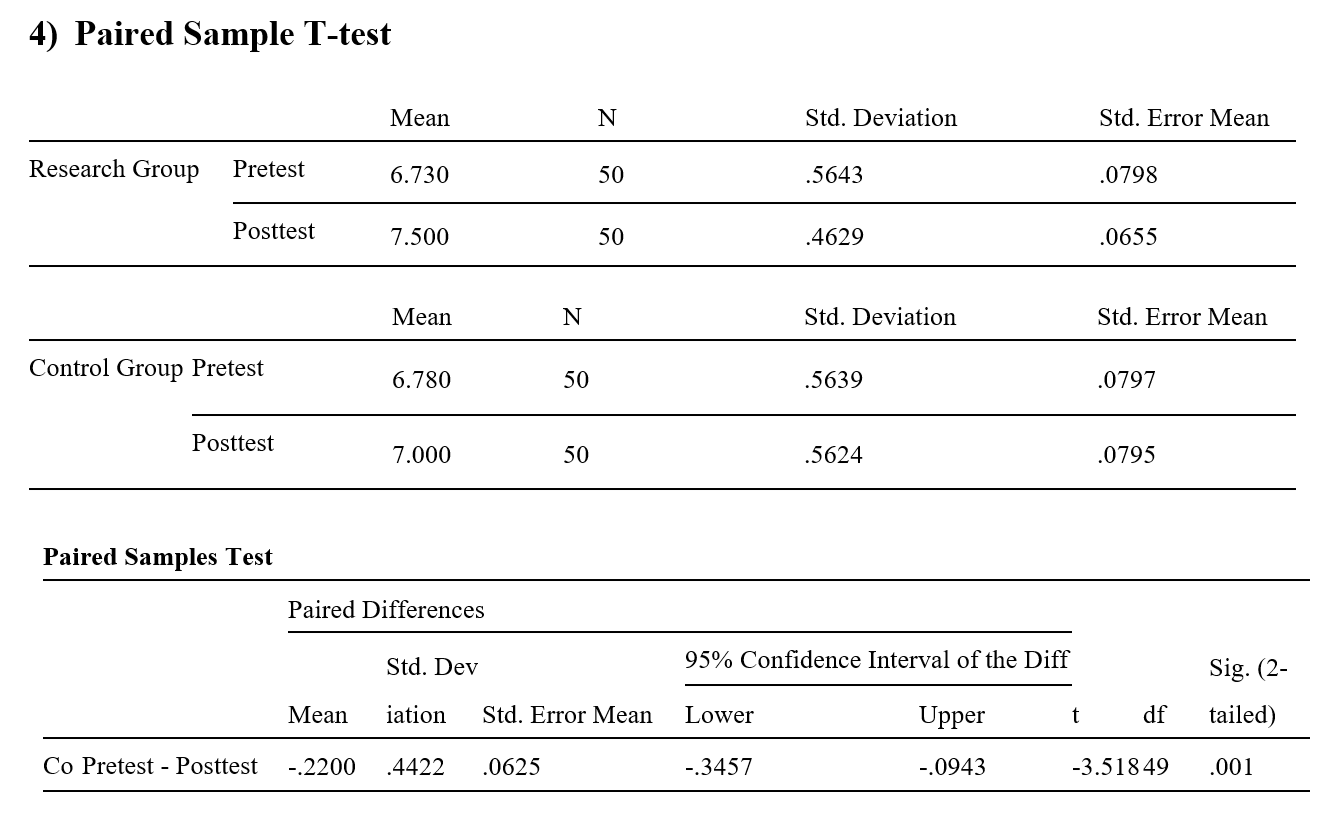

Fourth, a Paired Sample T-test was conducted to test for a significant difference between the pretest and post-test in both the research group and the control group. In the research group, P=0.000<0.05, so there is a significant difference between the pretest and post-test, and M pretest (6.73) < M post-test (7.50), which means that students' English writing grades improved markedly after the AWE intervention. In the control group, P=0.001<0.05, so there is also a significant difference, and M pretest (6.78) < M post-test (7.00), which means that students' English writing grades improved slightly after teacher evaluation. Fifth, an ANCOVA was conducted to test for a significant difference in post-test grades between the two groups, with the intervention method as the fixed factor, post-test grades as the dependent variable, and pretest grades as the covariate. P=0.000<0.05, so there is a significant difference between the post-test grades of the two groups, and M research group (7.50) > M control group (7.00), so the AWE system improved students' English writing grades more than teacher evaluation did.

| Group | Pretest | Post-test | F/χ²/t value | P-value |
|---|---|---|---|---|
| Paired Sample T-test | | | | |
| Research Group | 6.73±0.564 | 7.50±0.463 | -12.993 | 0.000 |
| Control Group | 6.78±0.563 | 7.00±0.563 | | 0.001 |
| ANCOVA | | | | |
| Research Group | | 7.5±0.463 | 85.666 | 0.000 |
| Control Group | | 7.0±0.562 | | |

(Figure 11)

Figure 11 shows the descriptive statistics for these two analyses.
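For readers who want to see what the two tests compute, here is a small standard-library sketch of a paired t statistic and of a one-covariate ANCOVA F statistic for the group effect. The scores are hypothetical illustrative values, not the study's data, and the function names are our own. The ANCOVA uses the classical adjusted sums of squares and assumes a common regression slope in both groups.

```python
from math import sqrt
from statistics import mean, stdev


def paired_t(pre, post):
    """t statistic for paired samples, using pretest minus post-test differences."""
    diffs = [a - b for a, b in zip(pre, post)]
    return mean(diffs) / (stdev(diffs) / sqrt(len(diffs)))


def _sp(xs, ys):
    """Sum of products of deviations from the means (a sum of squares when xs is ys)."""
    mx, my = mean(xs), mean(ys)
    return sum((x - mx) * (y - my) for x, y in zip(xs, ys))


def ancova_f(groups):
    """F statistic for the group effect with one covariate.

    groups: list of (covariate_values, outcome_values) pairs, one per group.
    """
    # Pooled within-group sums of squares and cross-products.
    ssx_w = sum(_sp(x, x) for x, _ in groups)
    ssy_w = sum(_sp(y, y) for _, y in groups)
    sp_w = sum(_sp(x, y) for x, y in groups)
    # Totals computed around the grand means.
    all_x = [v for x, _ in groups for v in x]
    all_y = [v for _, y in groups for v in y]
    ssx_t, ssy_t, sp_t = _sp(all_x, all_x), _sp(all_y, all_y), _sp(all_x, all_y)
    # Outcome variation left after removing the covariate's linear effect.
    adj_within = ssy_w - sp_w ** 2 / ssx_w
    adj_total = ssy_t - sp_t ** 2 / ssx_t
    df_between = len(groups) - 1
    df_within = len(all_y) - len(groups) - 1
    return ((adj_total - adj_within) / df_between) / (adj_within / df_within)


# Hypothetical scores for four students per group (not the study's data).
pre_r, post_r = [6.0, 6.5, 7.0, 7.5], [7.5, 7.5, 8.5, 8.5]
pre_c, post_c = [6.0, 6.5, 7.0, 7.5], [6.5, 6.5, 7.5, 7.5]
print("paired t (research):", round(paired_t(pre_r, post_r), 3))
print("ANCOVA F:", round(ancova_f([(pre_r, post_r), (pre_c, post_c)]), 3))
```

The paired t statistic is negative when post-test scores exceed pretest scores, which is the sign convention of the value reported for the research group in Figure 11.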

From the above data analysis, we can answer the first research question: both the AWE system and teacher evaluation can improve students’ English writing, but the AWE system improves it more. Appendix Three shows the full data tables obtained from SPSS for research question 1. Since the intervention time was limited (four weeks), it is uncertain whether students can continue to improve their English writing grades through this system after the short-term intervention. Students’ attitudes towards the system are therefore very important. A positive attitude would increase the reliability of the research results, whereas a negative attitude would mean the results need further discussion. The students’ attitude survey for the second research question was conducted to check their attitude.

4.2 Students’ attitudes towards the AWE system and teacher evaluation

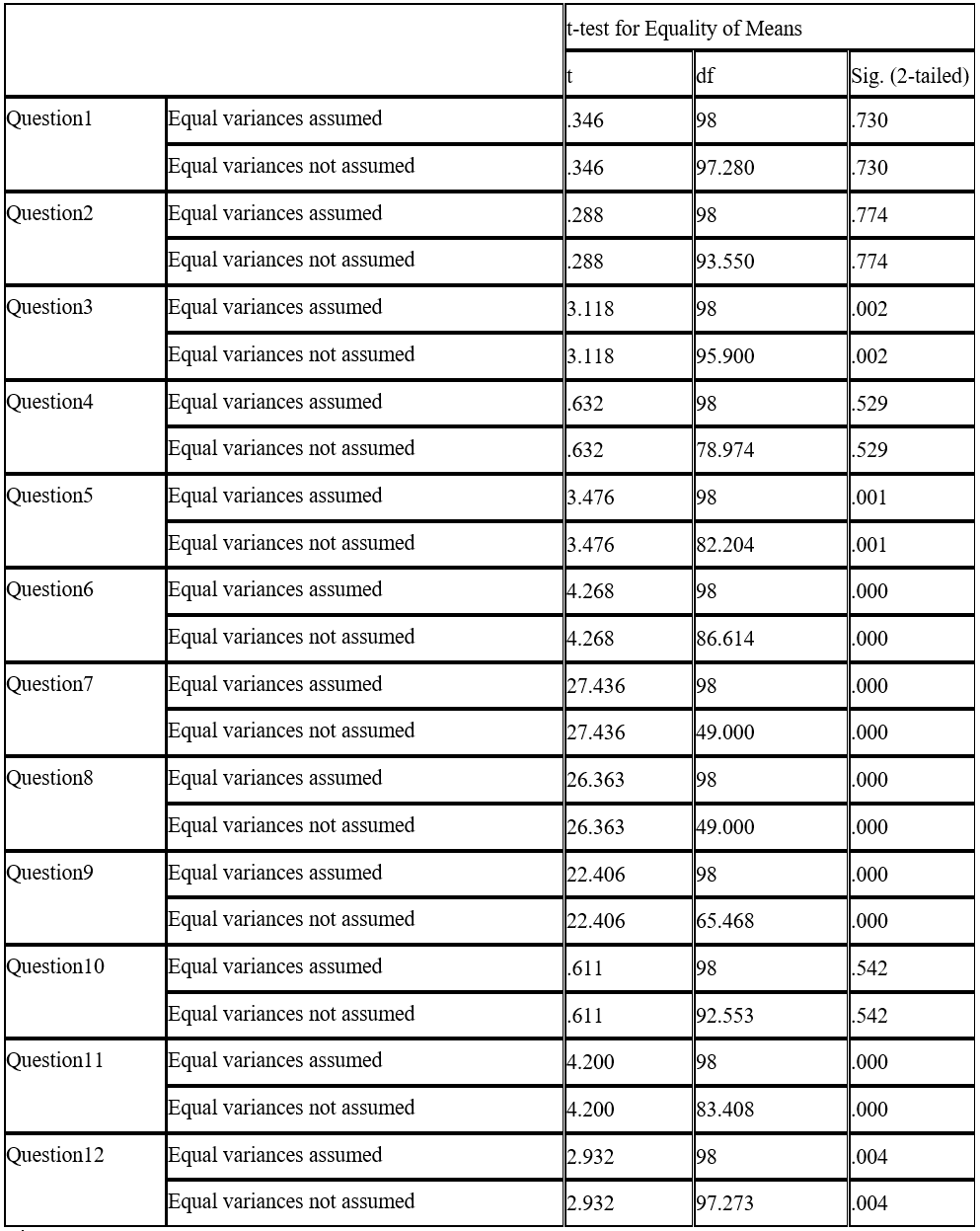

To answer the second research question, a survey with 12 Likert-type questions was given to all 100 participants. An Independent Sample T-test was conducted on each of the 12 items to identify the items on which students’ attitudes towards the two evaluation methods differ. Figure 12 shows that eight items (Questions 3, 5, 6, 7, 8, 9, 11, and 12) differ significantly between the two groups. Figure 13 shows the descriptive statistics.

(Figure 12)

| Items | Research Group (M ± SD) (n=50) | Control Group (M ± SD) (n=50) | F/χ²/t value | P-value |
|---|---|---|---|---|
| Question3 | 3.88±0.92 | 3.26±1.07 | 3.118 | 0.002 |
| Question5 | 3.90±0.65 | 3.30±1.04 | 3.476 | 0.001 |
| Question6 | 4.70±0.51 | 4.16±0.74 | 4.268 | 0.00 |
| Question7 | 5.00±0.00 | 2.28±0.70 | 27.436 | 0.00 |
| Question8 | 5.00±0.00 | 2.28±0.73 | 26.363 | 0.00 |
| Question9 | 4.90±0.30 | 2.40±0.73 | 22.406 | 0.00 |
| Question11 | 4.38±0.49 | 3.84±0.77 | 4.20 | 0.00 |
| Question12 | 4.40±0.78 | 3.92±0.85 | 2.93 | 0.04 |

(Figure 13)

From Figure 12 and Figure 13, Questions 3, 5, 6, 7, 8, 9, 11, and 12 show a significant difference, and the mean score of the research group on each of these 8 items is higher than that of the control group. This means that students hold a very positive attitude towards the AWE system: most of them think the system is effective for improving their English writing and will use it in the future. Thus, by combining the findings for research question 1 and research question 2, we can refine the study results and conclude that the AWE system is very beneficial for improving students' English writing grades. Appendix Four shows the full data tables obtained from SPSS for research question 2.

5. Conclusion: Evaluating the AWE system as school technology coordinators

As school technology coordinators, we should actively promote the development of school ICT, integrate learning and technology, and help technological products better support students' continued learning. We conclude this research in three parts: 1) the limitations of the research and possible solutions, 2) the advantages and disadvantages of the AWE system, and 3) what teachers need to do when using the AWE system.
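The item-level comparison can be approximately re-derived from the means and standard deviations in Figure 13. The sketch below is an illustration, not the SPSS procedure used in the study. The helper name is hypothetical, and the critical value of about 1.98 is the approximate two-tailed 5% threshold for 98 degrees of freedom. Because the reported means and standard deviations are rounded, the recomputed t values differ slightly from the reported ones.

```python
from math import sqrt

# (item, research mean, research SD, control mean, control SD, reported t), from Figure 13
ITEMS = [
    ("Question 3", 3.88, 0.92, 3.26, 1.07, 3.118),
    ("Question 5", 3.90, 0.65, 3.30, 1.04, 3.476),
    ("Question 6", 4.70, 0.51, 4.16, 0.74, 4.268),
    ("Question 7", 5.00, 0.00, 2.28, 0.70, 27.436),
    ("Question 8", 5.00, 0.00, 2.28, 0.73, 26.363),
    ("Question 9", 4.90, 0.30, 2.40, 0.73, 22.406),
    ("Question 11", 4.38, 0.49, 3.84, 0.77, 4.20),
    ("Question 12", 4.40, 0.78, 3.92, 0.85, 2.93),
]
N = 50             # students per group
T_CRITICAL = 1.98  # approximate two-tailed 5% critical value for df = 98


def t_equal_n(mean1, sd1, mean2, sd2, n):
    """Independent-samples t statistic for two groups of equal size n."""
    return (mean1 - mean2) / sqrt((sd1 ** 2 + sd2 ** 2) / n)


results = {item: t_equal_n(m1, s1, m2, s2, N) for item, m1, s1, m2, s2, _ in ITEMS}
for item, t in results.items():
    print(f"{item}: t = {t:.3f}, exceeds critical value: {t > T_CRITICAL}")
```

A positive t here means the research group's mean on that item is higher than the control group's.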

Limitations of the research: Given the time-consuming nature of the experimental subject (English writing) and the special circumstances of the epidemic, we identified three possible limitations. 1) English writing takes a long time (almost 40 minutes per task), and the school required a safe distance between students, so both groups completed all the writing tasks after class in their own time and space. We could not observe the writing process to ensure that no plagiarism was involved, so we cannot guarantee that the pretest and post-test scores are fully genuine. 2) The attitudes of the experimental group (AWE evaluation) towards “decrease the spelling errors” may be inaccurate because some students' computers have error correction and spelling assistance functions, which reduce spelling mistakes. That reduction is to the credit of the computer and does not mean that the students' spelling ability has improved. 3) The survey data from the control group (teacher evaluation) may not be fully truthful. Although the consent form stated that no evaluation or result of this research would affect students' final exam scores, students may still have worried that a negative evaluation would have bad consequences, so some students who held a negative attitude may have deliberately reported a positive one.

Improvement for limitations: When conditions permit, 1) arrange two classrooms for the two groups. Students in the control group write by hand in ordinary classrooms, and students in the experimental group write in the school’s computer room on identically configured computers without any writing assistance. No student may refer to any writing materials, and each classroom has a teacher to supervise the students. 2) Conduct the research after the final exam, so that students show a more realistic attitude in the survey. However, students are on vacation after the final exam and most of them go home, so it is impossible to keep all of them at school to participate in the experiment. In general, we have used scientific statistical and analytical methods to ensure the reliability of the experimental data as far as possible. For the three limitations that may affect the experimental results, we will continue to look for better solutions.

Effectiveness of the AWE system: The research results show that students' English writing grades can improve after a period of practice whether they receive feedback from this system or from the teacher, but the improvement is more marked with the AWE system. Moreover, students' attitude towards this AWE system is very positive, which suggests that the system can continue to help students improve their English writing grades even after the short intervention period.

Disadvantages of the AWE system: During the research, some students in the experimental group also told us that the system is of little use in judging whether a piece of writing is off-topic. The system is more useful for analyzing the structure of the writing, but it is not clear whether it can tell if students have addressed all parts of the task to achieve the task response. This shows the drawbacks of machine evaluation and the importance of real teachers.

Future application: In general, this AWE system can indeed improve students’ English writing ability, but machine evaluation also has some disadvantages. We believe that when teachers use technology to assist teaching, they should carefully analyze its pros and cons: they should neither blindly refuse technology nor blindly rely on it. Instead, teachers should use technology critically to make the most of its affordances for students' learning.

References

Becker, S., Bryman, A., & Ferguson, H. (Eds.). (2012). Understanding Research for Social Policy and Social Work 2E: Themes, Methods and Approaches. Policy Press.

Cohen, L., Manion, L., Morrison, K., Bell, R., Martin, S., McCulloch, G., & O'Sullivan, C. (2007). Research methods in education (Vol. 6, pp. 461-474). London: Routledge.

Ericsson, P. F. (2006). The meaning of meaning. Machine scoring of human essays: Truth or consequences, 28-37.

Evans-Andris, M. (1995), “Barrier to computer integration: micro-interaction among computer coordinators and classroom teachers in elementary schools”, Journal of Research on Computing in Education, Vol. 28 No. 1, pp. 29-45.

Enright, M. K., & Quinlan, T. (2010). Complementing human judgment of essays written by English language learners with e-rater® scoring. Language Testing, 27, 317–334.

Freedman, R., Ali, S. S., & McRoy, S. (2000). Links: what is an intelligent tutoring system?. Intelligence, 11(3), 15-16.

He, KK., Li, WG. (2005) Educational Technology. Peking University Press, 245-247.

Hou, Y. (2020). Implications of AES System of Pigai for Self-regulated Learning. Theory and Practice in Language Studies, 10(3), 261-268.

IELTS British Council. (2020). IELTS official website for writing band descriptions. Retrieved from: https://ielts.britishcouncil.org.hk/iorps/html/registration/selectExamTypeServlet.do?vEngine=.

Keith, T. Z. (2003). Validity of automated essay scoring systems. In M. D. Shermis & J. C. Burstein (Eds.), Automated essay scoring: A cross disciplinary perspective (pp. 147–167). Mahwah, New Jersey: Lawrence Erlbaum Associates.

Liu, S., & Onwuegbuzie, A. J. (2012). Chinese teachers’ work stress and their turnover intention. International Journal of Educational Research, 53, 160-170.

Pigai R & D Team. (2018). Pigai official publicity website. Retrieved from: http://www.pigai.org/index.php?c=us&id=5

Psotka, J., Massey, L. D., Mutter, S. A., & Brown, J. S. (Eds.). (1988). Intelligent tutoring systems: Lessons learned. Psychology Press.

Palermo, C., & Thomson, M. M. (2018). Teacher implementation of self-regulated strategy development with an automated writing evaluation system: Effects on the argumentative writing performance of middle school students. Contemporary Educational Psychology, 54, 255-270.

Strudler, N. B. (1995). The role of school-based technology coordinators as change agents in elementary school programs: A follow-up study. Journal of research on Computing in Education, 28(2), 234-257.

Sleeman, D., & Brown, J. S. (1982). Intelligent tutoring systems (pp. 345-pages). London: Academic Press.

Vantage Learning. (2006). Research summary: IntelliMetric™ scoring accuracy across genres and grade levels. Retrieved from http://www.vantagelearning.com/docs/intellimetric/IM_ReseachSummary_IntelliMetric_Accuracy_Across_Genre_and_Grade_Levels.pdf

Warschauer, M., & Grimes, D. (2008). Automated writing evaluation in the classroom. Pedagogies: An International Journal, 3(1), 22-36.

Weigle, S. C. (2010). Validation of automated scores of TOEFL iBT tasks against non-test indicators of writing ability. Language Testing, 27, 335–353.

Yang, XQ., Dai, YC. (2015). Practical research on the teaching model of college English autonomous writing based on Pigai. Audio-visual foreign language teaching, 3, 17-23.

Appendix One: Two Pilot Studies Results

Appendix Two: Latest IELTS writing band descriptions scale

Appendix Three: Overall Results for research question 1)

Appendix Four: Overall Results for research question 2)